Cloud vs Local AI: Real Cost Analysis for Spanish SMEs in 2026

You are running a business in Spain. You want AI to handle customer emails, draft documents, summarize reports, or answer internal questions. The first decision you face: pay a cloud provider per query, or buy a small machine and run models locally?

This article gives you the real numbers so you can decide for yourself.

gantt

title Cloud vs Local AI: 12-Month Cost Trajectory (EUR)

dateFormat YYYY-MM

axisFormat %b

section Cloud APIs

Month 1 — EUR 210 :done, cloud1, 2026-01, 30d

Month 2 — EUR 420 :done, cloud2, after cloud1, 30d

Month 3 — EUR 630 :done, cloud3, after cloud2, 30d

Month 4 — EUR 840 :active, cloud4, after cloud3, 30d

Month 5 — EUR 1050 :cloud5, after cloud4, 30d

Month 6 — EUR 1260 :cloud6, after cloud5, 30d

Month 7 — EUR 1470 :cloud7, after cloud6, 30d

Month 8 — EUR 1680 :cloud8, after cloud7, 30d

Month 9 — EUR 1890 :cloud9, after cloud8, 30d

Month 10 — EUR 2100 :cloud10, after cloud9, 30d

Month 11 — EUR 2310 :cloud11, after cloud10, 30d

Month 12 — EUR 2520 :crit, cloud12, after cloud11, 30d

section Local Hardware

Hardware Purchase EUR 920 :done, hw, 2026-01, 30d

Electricity only EUR 1.60/mo :done, elec, after hw, 330d

section Break-Even

Break-even at ~4.4 months :milestone, m1, 2026-05, 0d

Cloud API Pricing in April 2026

Every major AI provider charges per token, a unit of text that averages roughly three-quarters of an English word. Here is what the main providers charge for the models used in this comparison:

| Provider | Model | Input (per 1M tokens) | Output (per 1M tokens) |

|---|---|---|---|

| OpenAI | GPT-4o | $2.50 | $10.00 |

| Anthropic | Claude 3.5 Sonnet | $3.00 | $15.00 |

| Google | Gemini 1.5 Pro | $1.25 | $5.00 |

A typical business query — a customer email response, a document summary, or a data extraction task — consumes roughly 1,000 input tokens and 500 output tokens. That means each query costs approximately:

- OpenAI GPT-4o: $0.0075 per query (~EUR 0.007)

- Anthropic Claude 3.5 Sonnet: $0.0105 per query (~EUR 0.010)

- Google Gemini 1.5 Pro: $0.0038 per query (~EUR 0.003)

These numbers seem tiny. But they add up fast.
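The per-query figures above follow directly from the price table. As a minimal sketch, assuming the 1,000-input / 500-output token split stated in the text (the function and variable names are illustrative, not part of any provider's SDK):

```python
# Prices in USD per 1M tokens (input, output), copied from the table above.
PRICES_USD_PER_MILLION = {
    "OpenAI GPT-4o": (2.50, 10.00),
    "Anthropic Claude 3.5 Sonnet": (3.00, 15.00),
    "Google Gemini 1.5 Pro": (1.25, 5.00),
}


def cost_per_query_usd(input_price, output_price,
                       input_tokens=1_000, output_tokens=500):
    """Return the USD cost of one query given per-1M-token prices."""
    total = input_tokens * input_price + output_tokens * output_price
    return total / 1_000_000


for model, (input_price, output_price) in PRICES_USD_PER_MILLION.items():
    cost = cost_per_query_usd(input_price, output_price)
    print(f"{model}: ${cost:.5f} per query")
```

Changing `input_tokens` and `output_tokens` shows how sensitive the result is to query size: output tokens cost four to five times as much as input tokens with these providers, so long generated answers dominate the bill.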

The Local Hardware Alternative

A Mac Mini M4 with 24GB unified memory costs EUR 920 as a one-time purchase. It runs open-source models like Llama 3.1 8B, Mistral 7B, or Phi-3 locally with zero per-query cost. The only ongoing expense is electricity: roughly EUR 19 per year (about EUR 1.60 per month) when running 8 hours a day.

It handles the same tasks — email drafting, document summarization, data extraction, internal Q&A — at zero marginal cost per query.
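The article does not state the power draw or electricity tariff behind the EUR 19 figure. The sketch below shows how such an estimate can be computed; the 30 W average draw and the EUR 0.22 per kWh tariff are assumptions chosen for illustration, not the author's inputs, and should be replaced with your own measurements and contract price.

```python
# ASSUMPTION: average power draw under load, in watts (not from the article).
AVERAGE_POWER_WATTS = 30.0
# ASSUMPTION: electricity tariff in EUR per kWh (not from the article).
TARIFF_EUR_PER_KWH = 0.22
# From the article: the machine runs 8 hours a day.
HOURS_PER_DAY = 8


def annual_electricity_cost_eur(power_watts, hours_per_day, tariff_eur_per_kwh):
    """Return the yearly electricity cost in EUR for a device."""
    kwh_per_year = power_watts / 1_000 * hours_per_day * 365
    return kwh_per_year * tariff_eur_per_kwh


annual = annual_electricity_cost_eur(
    AVERAGE_POWER_WATTS, HOURS_PER_DAY, TARIFF_EUR_PER_KWH
)
print(f"Annual: EUR {annual:.2f}, monthly: EUR {annual / 12:.2f}")
```

With these assumed inputs the result lands close to the article's figure, which suggests the estimate is plausible, but the real cost depends on your tariff and on how heavily the machine is loaded.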

Monthly Cost Comparison by Usage Level

Here is where the math gets interesting. We calculated monthly costs for three realistic usage scenarios, assuming a 30-day month and a blended average across the three cloud providers (~EUR 0.007 per query):

| Usage Level | Cloud APIs (monthly) | Mac Mini M4 (monthly) | Annual Cloud | Annual Local |

|---|---|---|---|---|

| 1,000 queries/day | EUR 210 | EUR 1.60 | EUR 2,520 | EUR 19 |

| 5,000 queries/day | EUR 1,050 | EUR 1.60 | EUR 12,600 | EUR 19 |

| 10,000 queries/day | EUR 2,100 | EUR 1.60 | EUR 25,200 | EUR 19 |

The local cost stays flat. The cloud cost scales linearly with every additional query.
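The table can be reproduced with a few lines of arithmetic. This is a minimal sketch using the article's blended rate and electricity figure; the 30-day month is an assumption inferred from the table values, and the function name is illustrative.

```python
BLENDED_EUR_PER_QUERY = 0.007  # blended cloud average from the text
LOCAL_EUR_PER_MONTH = 1.60     # electricity only, from the table
DAYS_PER_MONTH = 30            # ASSUMPTION: inferred from the table values


def monthly_cloud_cost_eur(queries_per_day):
    """Return the monthly cloud API cost in EUR at the blended rate."""
    return queries_per_day * DAYS_PER_MONTH * BLENDED_EUR_PER_QUERY


for queries_per_day in (1_000, 5_000, 10_000):
    monthly = monthly_cloud_cost_eur(queries_per_day)
    print(
        f"{queries_per_day:>6,} queries/day: "
        f"cloud EUR {monthly:,.0f}/month (EUR {monthly * 12:,.0f}/year), "
        f"local EUR {LOCAL_EUR_PER_MONTH:.2f}/month"
    )
```

Swapping in a single provider's per-query price instead of the blended rate gives a provider-specific version of the same table.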

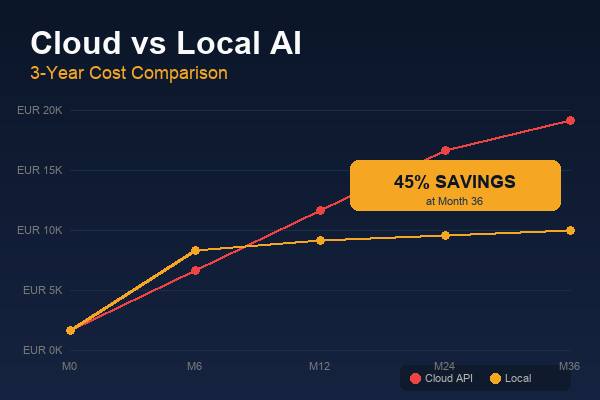

Break-Even Analysis

The Mac Mini M4 costs EUR 920 upfront. Here is how quickly it pays for itself:

- At 1,000 queries/day: Break-even in 4.4 months

- At 5,000 queries/day: Break-even in 26 days

- At 10,000 queries/day: Break-even in 13 days

Even at 1,000 queries per day, the hardware pays for itself in under five months. At 10,000 queries per day, the savings reach roughly EUR 25,000 per year compared to cloud APIs.
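The break-even figures come from dividing the hardware price by the daily cloud spend it replaces. A minimal sketch under the article's numbers follows; the 30-day month and the optional electricity adjustment are assumptions added here, and the function name is illustrative.

```python
HARDWARE_EUR = 920.0           # Mac Mini M4 price from the text
BLENDED_EUR_PER_QUERY = 0.007  # blended cloud average from the text
LOCAL_EUR_PER_MONTH = 1.60     # electricity only, from the text
DAYS_PER_MONTH = 30            # ASSUMPTION: a 30-day month


def break_even_days(queries_per_day, include_electricity=False):
    """Return the days until cumulative cloud spend equals the hardware price."""
    daily_cloud = queries_per_day * BLENDED_EUR_PER_QUERY
    daily_local = LOCAL_EUR_PER_MONTH / DAYS_PER_MONTH if include_electricity else 0.0
    daily_saving = daily_cloud - daily_local
    if daily_saving <= 0:
        raise ValueError("Cloud is cheaper at this volume; no break-even point.")
    return HARDWARE_EUR / daily_saving


for queries_per_day in (1_000, 5_000, 10_000):
    days = break_even_days(queries_per_day)
    print(f"{queries_per_day:>6,} queries/day: {days:.0f} days "
          f"({days / DAYS_PER_MONTH:.1f} months)")
```

Passing `include_electricity=True` subtracts the local running cost from the daily saving. At these volumes it shifts the result by less than a day at 5,000 queries per day and above, and by about a day at 1,000, which is why the simpler calculation is a reasonable approximation.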

The Privacy Advantage: GDPR and Data Sovereignty

For a Spanish SME, cost is not the only factor. Under GDPR, every time you send customer data to a cloud API, you need:

- A Data Processing Agreement with the provider

- A legal basis for the data transfer (often outside the EU)

- Documentation of what data is sent and how it is processed

- The ability to delete data on request from the provider’s servers

With local AI, your data never leaves your building. There is no international transfer and no third-party processor, so much of the compliance overhead listed above disappears. GDPR still applies to how you process the data in-house, but for businesses handling client contracts, medical records, legal documents, or financial data, keeping everything local is more than a convenience: it is a competitive advantage.

The Honest Trade-Off: Quality

Here is where we need to be straight with you. Cloud models like GPT-4o and Claude are smarter than local models running on a Mac Mini. They handle nuance better, reason more deeply, and produce more polished output.

But here is the reality: 80% of SME AI tasks do not need frontier intelligence. Answering FAQs, extracting data from invoices, drafting standard emails, summarizing meeting notes, classifying support tickets — a well-configured local model handles these just as well as a cloud model at a fraction of the cost.

For the remaining 20% — complex analysis, creative content, or tasks requiring the latest knowledge — you can use cloud APIs selectively. A hybrid approach gives you the best of both worlds: local for volume, cloud for complexity.
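One way to picture the hybrid approach is a small routing rule that sends each task type to a backend. The sketch below is illustrative only: the task labels are hypothetical names derived from the examples in the text, and sending unknown task types to the local model by default is an assumption, not a recommendation from the article.

```python
from collections import Counter

# Hypothetical task labels based on the examples in the text.
LOCAL_TASKS = {
    "faq", "invoice_extraction", "standard_email",
    "meeting_summary", "ticket_classification",
}
CLOUD_TASKS = {"complex_analysis", "creative_content", "latest_knowledge"}

# ASSUMPTION: unknown task types stay local to keep marginal cost at zero.
DEFAULT_BACKEND = "local"


def route(task_type):
    """Return 'local' or 'cloud' for a given task label."""
    if task_type in CLOUD_TASKS:
        return "cloud"
    if task_type in LOCAL_TASKS:
        return "local"
    return DEFAULT_BACKEND


# Example workload: mostly routine tasks, a few complex ones.
workload = ["faq"] * 5 + ["invoice_extraction"] * 3 + ["complex_analysis"] * 2
print(Counter(route(task) for task in workload))
```

In practice the routing signal might also include document length or whether the request contains personal data, but those criteria are outside what the article specifies.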

Our Recommendation

For most Spanish SMEs processing between 1,000 and 10,000 AI queries per day:

- Start with local hardware — a Mac Mini M4 handles the majority of tasks

- Use cloud APIs sparingly for tasks that genuinely need frontier models

- Track your actual usage to know exactly where your money goes

- Factor in GDPR compliance costs — they are real and often overlooked

The break-even period is short. The privacy benefits are immediate. And the peace of mind of not watching a cloud bill grow every month is worth something too.

Related reading

- Cloud vs Local AI Cost Benchmarks

- Best Local LLM Models for Q2 2026: Practical Comparison for SMEs

- DeepSeek R1: The Best Open-Source Reasoning Model You Can Run Locally

Related Resources

- Our Hardware Products — Pre-configured edge AI nodes for Spanish SMEs

- AI Model Comparison — Full comparison of local vs cloud models

- Tools and Resources — Deployment guides, calculators, and templates

- Edge AI vs Cloud: 3-Year TCO — Extended total cost of ownership analysis

- How to Implement AI in a Spanish Company — Step-by-step deployment guide.

Sources: OpenAI Pricing · Ollama · Apple Mac Mini M4

For more information on how VORLUX AI can help your business with local AI deployments, visit vorluxai.com or schedule a free consultation.